You get only 2 seconds to convince your visitors of your relevance. If you fail, the visitor clicks the back button and leaves your site for good. Here’s how you can stop it.

One of the most frequently asked questions about SEO is: “How to safeguard a site from future Google updates?”

Indeed, there’s no short answer to this. Google rolls out updates almost daily, making it hard for webmasters to tweak their sites to stay safe. Given the magnitude of major Google updates, staying safe is a matter of following SEO best practices rather than fixing a site only after it’s affected by a random algorithmic update.

Here are a few guidelines to help you understand what really matters to Google and how to keep your site safe from future Google updates.

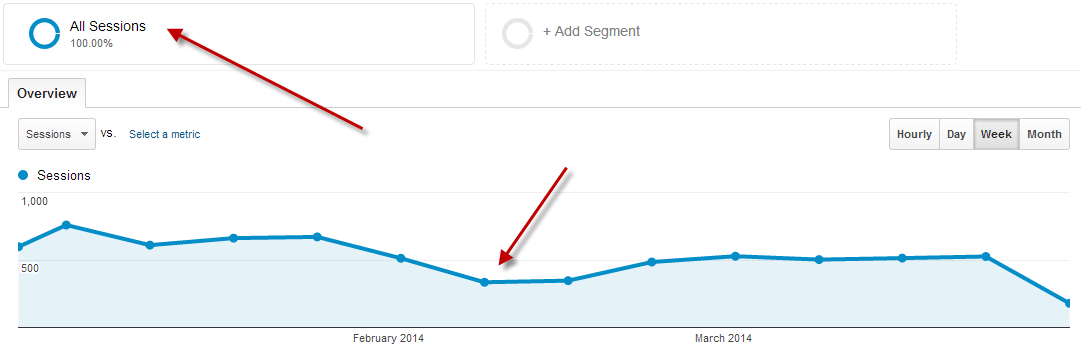

Correlate Traffic Spikes with Updates

When Google releases an algorithmic update, you may notice fluctuations in traffic to your website. It’s important to pay attention to traffic spikes and drops before and after Google updates, and to analyze the possible triggers behind them.

For example, if you saw a sharp decline in traffic because an algorithmic update was rolled out to devalue low-quality content, you should dive deep into the content of your website and see which articles are the worst performers.

Look at the numbers for your articles in Google Analytics and Google Search Console to see which ones have suffered from the updates. Take appropriate actions to ensure your site is safe from future Google updates.
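As a rough sketch of that comparison, the snippet below measures how average daily sessions changed in the week before and after an update date. It assumes you have exported daily session counts from your analytics tool into a dictionary; the function name `traffic_change_around`, the 7-day window, the update date, and all the numbers are illustrative assumptions, not part of any Google product.

```python
from datetime import date, timedelta
from statistics import mean


def traffic_change_around(daily_sessions, update_day, window=7):
    """Return the percent change in mean daily sessions after an update.

    daily_sessions: dict mapping datetime.date -> sessions (hypothetical export).
    update_day: the date the update was reported to roll out.
    window: number of days compared on each side of update_day.
    """
    before = [daily_sessions[update_day - timedelta(days=i)]
              for i in range(1, window + 1)
              if update_day - timedelta(days=i) in daily_sessions]
    after = [daily_sessions[update_day + timedelta(days=i)]
             for i in range(1, window + 1)
             if update_day + timedelta(days=i) in daily_sessions]
    if not before or not after or mean(before) == 0:
        return None  # not enough data to compare
    return (mean(after) - mean(before)) / mean(before) * 100


# Made-up numbers: 1,000 sessions a day before the update, 700 a day after.
update = date(2017, 3, 8)  # hypothetical update date
sessions = {update + timedelta(days=offset): (1000 if offset < 0 else 700)
            for offset in range(-7, 8)}

change = traffic_change_around(sessions, update)
print(f"{change:.1f}% change in average daily sessions")
```

A large negative number right after a known update date is only a hint, not proof. Seasonality, tracking changes, or a site migration can produce the same pattern, so check the affected pages before drawing conclusions.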

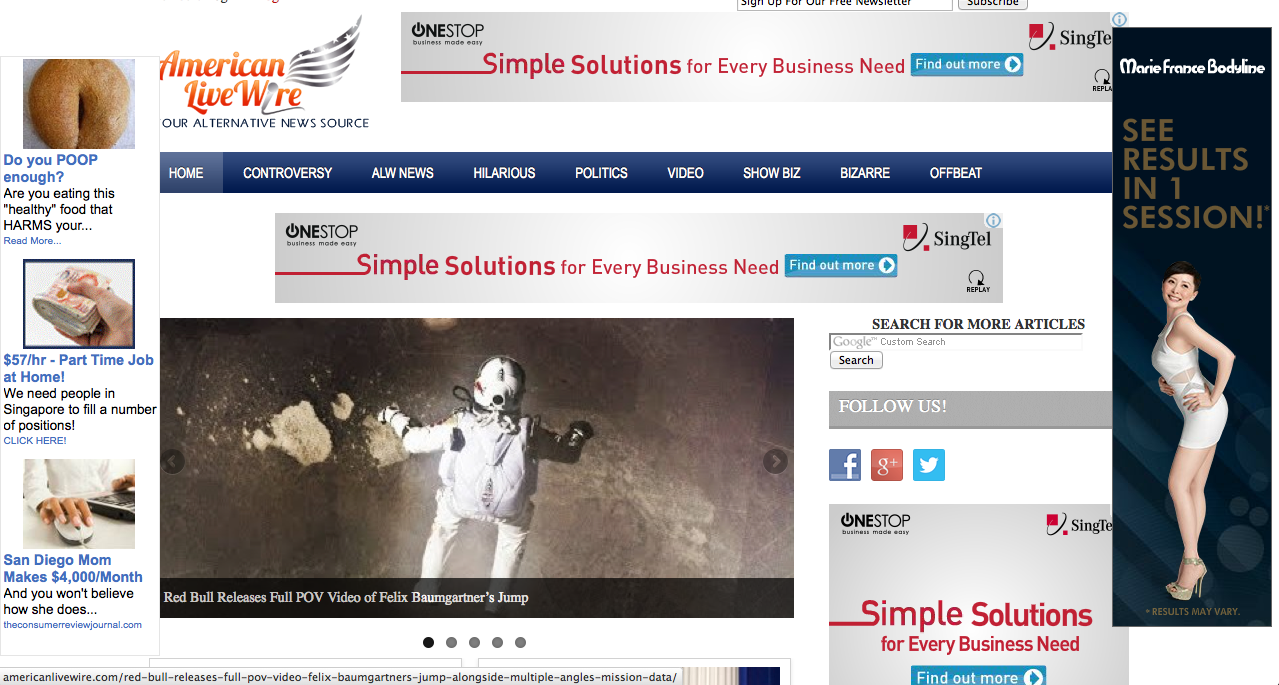

Fix Ad-heavy Pages

The Fred update essentially targeted sites with little regard for quality control. Many sites with an unreasonable number of ads and a poor user experience were reportedly hit. Many site owners also claimed they saw immediate improvement as soon as they removed the ads from their sites. This suggests Google is likely to roll out many more updates focused on user experience moving forward.

Even if your affiliate-heavy site escaped Fred this time, there’s no guarantee it will get away with bad user experience the next time.

Focus on creating a decent user experience by striking an ideal balance between ads and content. Consider the number of ads your blog really needs and place them judiciously.

Your visitors don’t like deceptive ads, and neither does Google. Avoid placing ads in the middle of the article or adding videos that autoplay.

Beef Up Thin Content

Just because the Fred update was designed to devalue ad-heavy pages doesn’t mean that you should remove all ads from your pages altogether. In fact, this is not even what Google wants you to do. What it really wants is for you to keep the ratio between content and ads reasonable so the overall user experience doesn’t suffer. Moreover, use an appropriate content auditing tool to assess the content quality on your website and then try to improve the pages that have a lower word count.

What's "thin" content? If you visually look at the content & realize it took ABSOLUTELY NO TIME OR EFFORT to create it, that's thin content!

— Casey Markee (@MediaWyse) February 14, 2014

Please note that a low word count may not always indicate that the page has little utility. The bottom line is that the page should adequately answer relevant queries. After all, some topics don’t need a high word count to answer queries.

It’s not rocket science: ask yourself if the body content fulfills the promises made in the title of the page.
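If you don’t have an auditing tool at hand, a first pass over word counts can be sketched in a few lines. The example below assumes you already have the plain body text of each page (for instance from a crawl export); the function name `flag_short_pages` and the 300-word threshold are arbitrary choices for illustration, not a Google rule.

```python
import re


def flag_short_pages(pages, min_words=300):
    """Return (url, word_count) pairs for pages below min_words, shortest first.

    pages: dict mapping URL -> plain body text (hypothetical crawl export).
    min_words: arbitrary review threshold, not a Google rule.
    """
    counts = {url: len(re.findall(r"\b\w+\b", text)) for url, text in pages.items()}
    short = [(url, n) for url, n in counts.items() if n < min_words]
    return sorted(short, key=lambda item: item[1])


# Made-up pages for illustration.
pages = {
    "/guide": "word " * 1200,
    "/faq": "A short but complete answer to one question.",
    "/tag/misc": "Coming soon",
}
for url, n in flag_short_pages(pages):
    print(url, n)
```

Treat the output as a list of pages to review by hand rather than a list of pages to rewrite. A short FAQ answer may be exactly what the searcher needs, while a placeholder page with two words probably isn’t.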

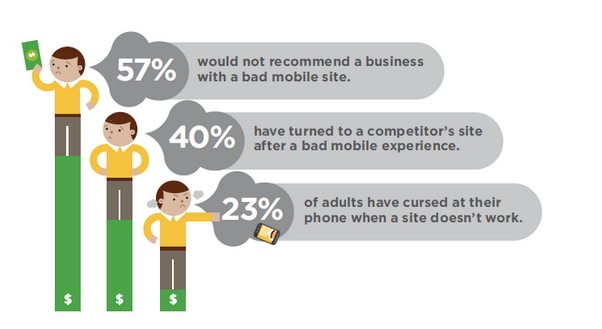

Prioritize Mobile Experience

With the burgeoning adoption of mobile devices, Google has been stressing the importance of a mobile-friendly experience. It rolled out the Mobilegeddon update to help mobile-friendly sites rank higher on SERPs. It also released an update that hands out the Intrusive Interstitial Penalty, a penalty for websites using pop-up ads above the fold on mobile devices.

Essentially, Google has been advocating against following any tactic that would potentially harm user experience on mobile devices.

Google recently analyzed webpages and found that 70% of them had a poor user experience, with visual content above the fold taking more than 10 seconds to load. This essentially means the bounce rate on those pages could increase by up to 113%.

Of course, best practice says your pages should load within 3 seconds. To start off, take Google’s mobile-friendly test and then work on its recommendations to improve your mobile user experience.

Stay Visible in Local Search

Google has been constantly working on its search engine to improve local search results. After releasing the Pigeon update in 2014, Google rolled out another update called “Possum”. The update is designed to help users find nearby businesses. As a result, the physical location of a searcher plays a big role in local search rankings.

This basically means if a searcher is physically closer to your business at the time of using Google Search, you’re more likely to appear at the top of the local search listings.

If your business is more dependent on local traffic, this update can do a world of good for you. However, you still need to take care of a couple of SEO aspects of your website before you can benefit from this update. Here’s what you need to do:

- Create a Google My Business page and categorize your business properly. It helps Google understand your entity for local search purposes.

- Ensure your NAP (name, address, phone number) is consistent across all local listings. With a more consistent NAP, Google’s job becomes easier.

- Get listed on all local business directories. Google relies on local directories to evaluate your relevance. Make sure you’re listed everywhere that matters.

- Target local keywords to help Google rank your business for a variety of searches. Use any popular SEO tool or Google’s search suggestions to find relevant keywords.

- Spy on your competitors to understand the keywords they are using to outrank you. Use rank tracking software to know how far behind you are.
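The NAP check in the list above is easy to automate once you have collected your listings. The sketch below takes NAP to mean name, address, and phone number, normalizes each listing, and reports the sources that disagree with the most common version. The source names, the business details, and both function names are made up for illustration, and the normalization is deliberately simple (it won’t treat “St” and “Street” as the same thing).

```python
import re
from collections import Counter


def normalize(listing):
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    name = " ".join(listing["name"].lower().split())
    address = " ".join(re.sub(r"[.,]", " ", listing["address"].lower()).split())
    phone = re.sub(r"\D", "", listing["phone"])  # keep digits only
    return name, address, phone


def inconsistent_listings(listings):
    """Return sources whose normalized NAP differs from the most common one."""
    normalized = {source: normalize(item) for source, item in listings.items()}
    reference, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(source for source, nap in normalized.items() if nap != reference)


# Hypothetical listings for the same business on three sites.
listings = {
    "google": {"name": "Acme Bakery", "address": "12 Main St., Springfield",
               "phone": "(555) 010-2000"},
    "yelp": {"name": "ACME  Bakery", "address": "12 Main St Springfield",
             "phone": "555-010-2000"},
    "directory_x": {"name": "Acme Bakery", "address": "12 Main Street, Springfield",
                    "phone": "555 010 2000"},
}
print(inconsistent_listings(listings))
```

Here the third listing is flagged because its address is spelled differently. Whether such a difference matters is a judgment call, but fixing it costs little and removes any ambiguity.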

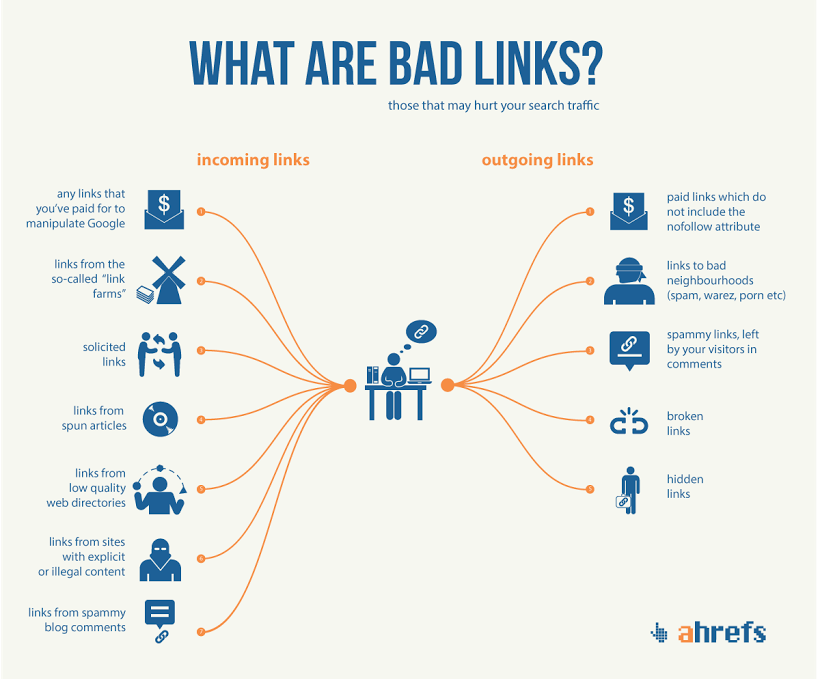

Remove Harmful Links

Google is getting much smarter with its algorithm, as it has incorporated artificial intelligence to determine the legitimacy of a website’s backlinks for ranking purposes. Therefore, you are no longer safe harboring bad links, meaning links from sources that are either irrelevant or carry footprints of manipulation.

The Penguin update, rolled out in 2012, algorithmically demoted many sites that relied on bad links for ranking manipulation. However, Google has become much smarter since then: Penguin 4.0, rolled out in late 2016, devalues the bad links themselves instead of demoting the whole site.

Either way, you’re better off removing those links after a manual inspection of your backlink profile. If you notice links from irrelevant or poor sources, contact the site owners right away and ask them to remove those links.

Alternatively, you can use Google’s Disavow Tool to ask Google to ignore links from those sources. After the Fred update, many people found that links from social bookmarking sites were the culprit behind their sites falling in search rankings.
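The Disavow Tool expects a plain text file with one entry per line: either a full URL or a whole domain prefixed with `domain:`, with `#` starting a comment line. A minimal sketch for building such a file from your own list of bad sources might look like this. The function name `build_disavow_file` and the example domains are hypothetical.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Build the text of a disavow file from bad domains and individual URLs.

    domains: whole domains to disavow (written as "domain:example.com").
    urls: individual page URLs to disavow.
    note: optional comment placed on the first line.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize and de-duplicate so each domain appears once.
    lines += [f"domain:{d}" for d in sorted({d.strip().lower() for d in domains})]
    lines += sorted({u.strip() for u in urls})
    return "\n".join(lines) + "\n"


# Hypothetical sources found during a manual backlink review.
text = build_disavow_file(
    domains=["Spammy-Bookmarks.example", "link-farm.example", "link-farm.example "],
    urls=["http://blog.example/paid-post.html"],
    note="Owners contacted, no reply",
)
print(text)
```

Save the result as a UTF-8 text file before uploading it. Disavow whole domains sparingly, since a mistake there tells Google to ignore links that may have been helping you.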

Final Thoughts

As per a recent tweet from John Mueller, Google makes improvements to its search algorithm almost daily. However, not all updates are big enough to cause a noticeable change in your rankings. If you continue to follow SEO best practices (improving content quality, user experience, and your link profile), you shouldn’t really have to worry about the implications of any algorithmic updates Google may release in the future.